Baserow AI Security & Data Governance

Baserow’s AI architecture is built on the fundamental principles of security, sovereignty, flexibility and user control.

This whitepaper provides a transparent technical explanation of how Baserow protects your data across both hosting environments, detailing our encryption standards, data flow paths, and retention policies.

Section 1. Executive Summary

Artificial Intelligence has fundamentally changed how organizations manage data, offering the ability to transform natural language into complex formulas, summarize vast datasets, and automate insights instantly. However, the adoption of generative AI brings critical questions regarding data privacy, residency, and ownership.

At Baserow, we believe that using AI tools should never require sacrificing control over your data. Unlike rigid SaaS platforms that force all data through a central processing funnel, Baserow offers a dual-governance model designed for flexibility:

- For Cloud Users (SaaS): A secure, managed enterprise proxy that ensures GDPR compliance and ease of use.

- For Self-Hosted Users: Data travels directly from your infrastructure to the AI provider, ensuring Baserow never sees, logs, or processes your AI content.

Section 2. Shared Security Foundation

This section details the security measures and policies that apply to all Baserow users, regardless of deployment type.

Baserow’s AI architecture is designed to support two distinct operational models. Baserow allows you to configure the data path, the model provider, and the security boundary according to your organization’s specific compliance requirements.

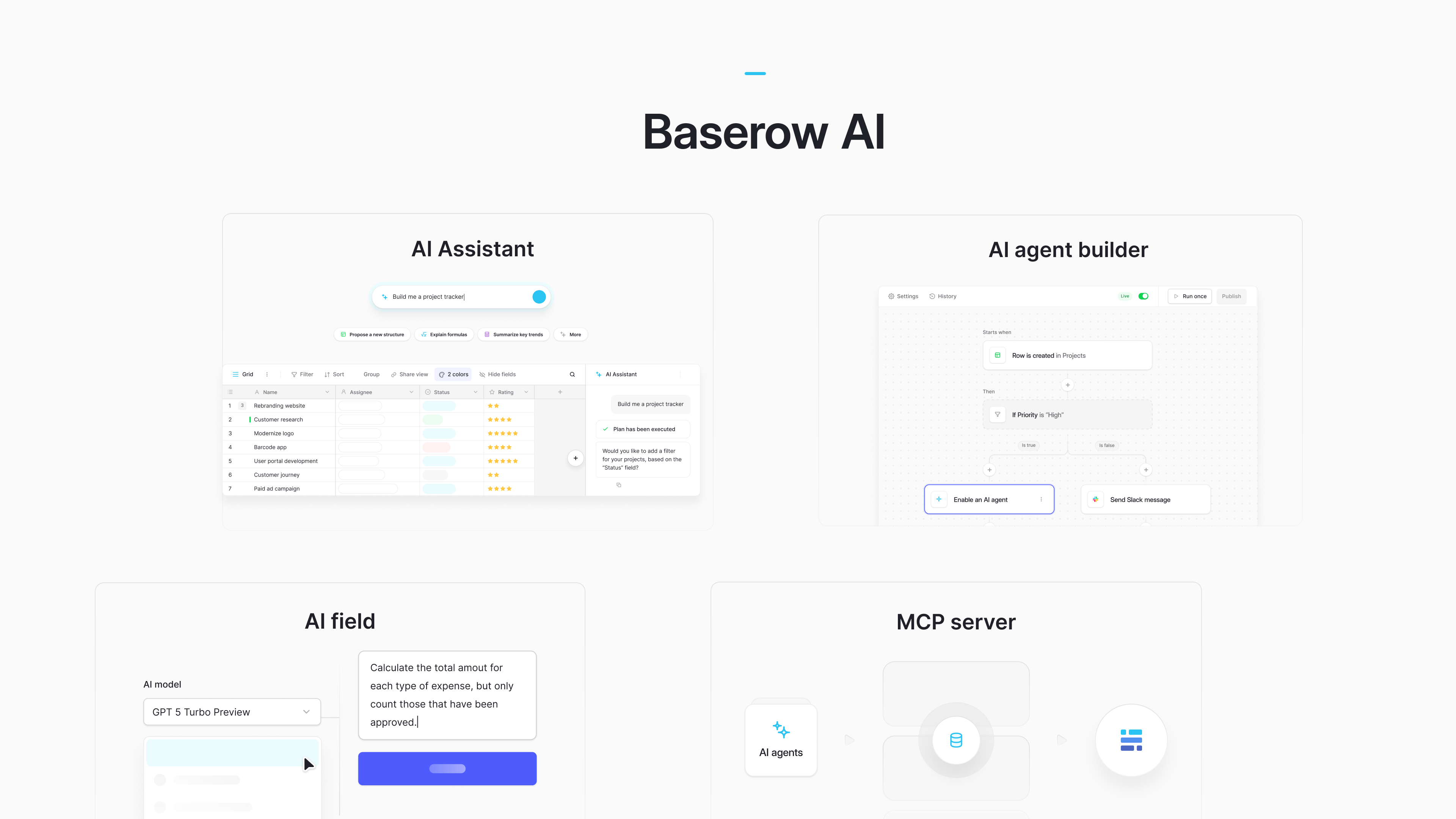

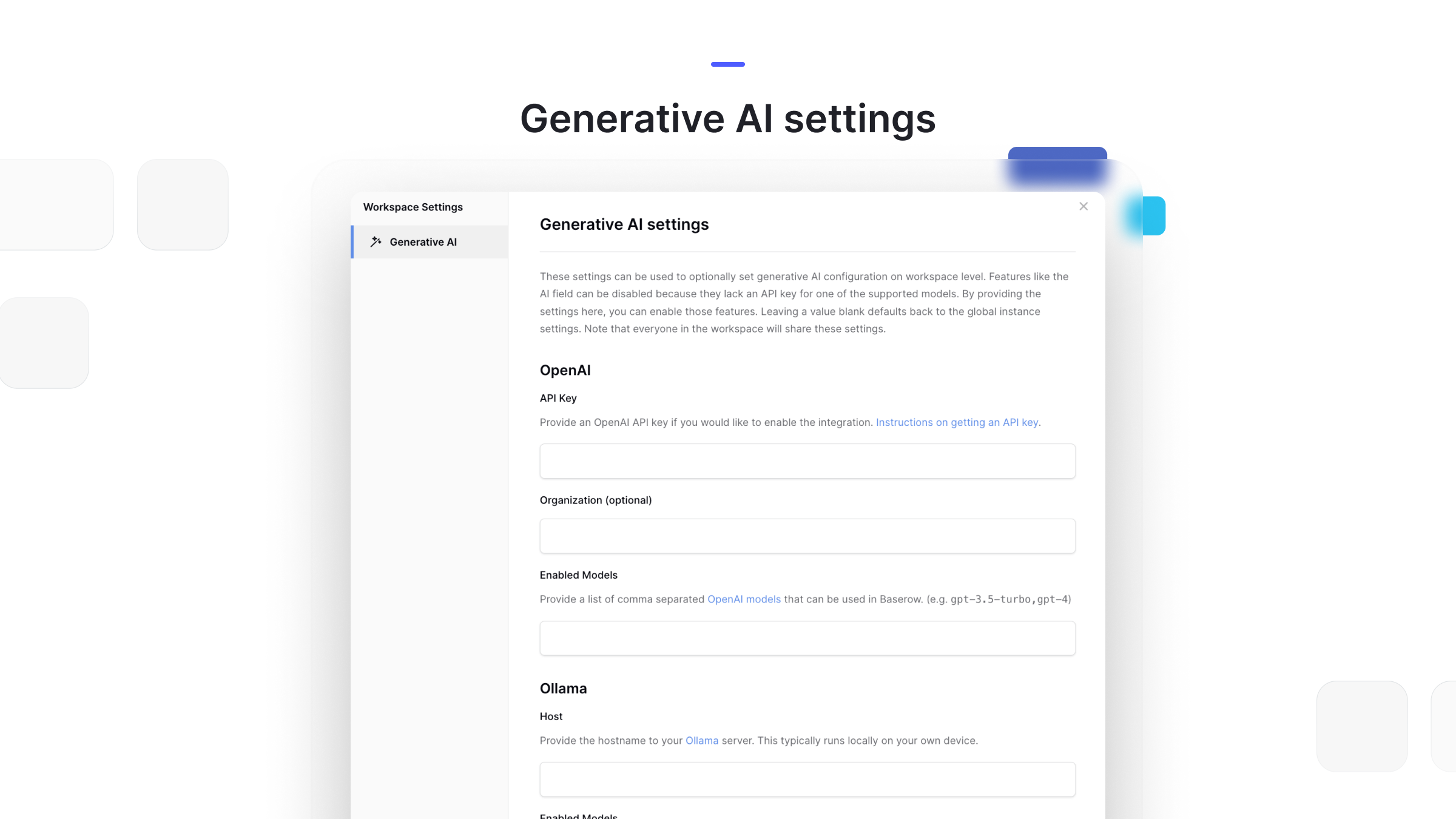

2.1 “Bring Your Own Key” (BYOK) Architecture

Central to our design is the Bring Your Own Key (BYOK) architecture. Rather than requiring you to purchase AI credits, Baserow allows you to connect your workspace directly to your preferred AI providers, including OpenAI, Anthropic, Mistral, OpenRouter, and Ollama.

This approach offers three critical security and operational benefits:

- Direct Contract: You are not subject to a secondary sub-processor agreement for AI data. Your data privacy terms exist directly between your organization and the AI provider (e.g., OpenAI Enterprise).

- Granular Governance (Instance vs. Workspace): Administrators can enforce global policies at the Instance Level (applying to the entire instance), while individual teams can override these for supported features at the Workspace Level to use specific models or API keys.

- Model Transparency: You control exactly which model version is being used, preventing unexpected changes in output quality.

Baserow allows you to connect specific models globally or per workspace, ensuring you control the data pipe.

2.2 API Key Management & Storage

Baserow offers flexible credential management to suit your security posture. Depending on your deployment model, you can manage API keys at two distinct levels:

- Workspace-Level Keys (Cloud & Self-Hosted): Users can enter keys directly into the Workspace settings to enable AI for specific teams. These keys are stored in your database to allow the application to authenticate requests on your behalf.

- Instance-Level Keys (Self-Hosted): For maximum security, self-hosted administrators can configure API keys globally using Environment Variables on the server. This method keeps keys out of the application database entirely, ensuring they are managed purely at the infrastructure level.
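The two key levels above can be pictured as a simple lookup order. The following is a minimal sketch, not Baserow's actual implementation: the function name, the `openai_api_key` setting name, and the environment variable name `BASEROW_OPENAI_API_KEY` are assumptions made for illustration, so check the developer documentation for the real names.

```python
import os
from typing import Mapping, Optional


def resolve_api_key(
    workspace_settings: Mapping[str, str],
    env: Mapping[str, str] = os.environ,
    env_var: str = "BASEROW_OPENAI_API_KEY",  # assumed variable name
) -> Optional[str]:
    """Return the workspace-level key if set, otherwise the instance-level key."""
    # Workspace-level key: entered in the workspace settings and stored in the database.
    workspace_key = workspace_settings.get("openai_api_key")  # hypothetical setting name
    if workspace_key:
        return workspace_key
    # Instance-level key: read from the server environment, never written to the database.
    return env.get(env_var) or None
```

In this sketch a workspace key takes precedence over the instance key, mirroring the workspace-level override described in 2.1.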

2.3 Encryption in Transit

Regardless of the deployment model, Baserow enforces strict standards to protect data integrity.

All data transmission, whether between the User and the Baserow Server, or the Baserow Server and the AI Provider, is encrypted using TLS/SSL (Transport Layer Security). This ensures that API keys, prompts, and generated formulas are protected against interception while traveling over the network.
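For teams that build their own integrations alongside Baserow, the sketch below shows what a verified TLS client configuration can look like in Python. It is illustrative only: the TLS 1.2 minimum is an assumption chosen for the example, not a documented Baserow setting.

```python
import ssl


def build_tls_context() -> ssl.SSLContext:
    """Create a client-side TLS context that verifies certificates and hostnames."""
    # create_default_context() turns on certificate verification and hostname checks.
    context = ssl.create_default_context()
    # Assumed floor for this example; refuse anything older than TLS 1.2.
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


context = build_tls_context()
```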

2.4 No Training on Customer Data

A fundamental concern for any enterprise adopting AI is the risk of intellectual property leakage. Baserow provides a definitive stance on model training:

- Zero Training by Baserow: Baserow B.V. does not use customer data (prompts, outputs, or table content) to train, fine-tune, or improve our internal models or the models of our providers. Simply using the AI features does not grant us the right to learn from your data.

- Provider Isolation: When using our Cloud or Self-Hosted “Bring Your Own Key” models, your data is processed under the API usage policies of the respective provider (e.g., OpenAI, Anthropic). These enterprise API agreements typically guarantee that data sent via the API is not used to train their foundation models.

2.5 User Feedback & Model Improvement

Baserow believes that quality improvement should never come at the cost of data privacy.

We distinguish strictly between General Model Training and User-Initiated Feedback.

- Active Feedback Loop (Opt-In): Users can voluntarily help improve the accuracy of the AI assistant by using the Thumbs Up / Thumbs Down controls on specific AI outputs.

- Anonymization: If you choose to provide this feedback, Baserow collects only the specific prompt and response associated with that rating. These samples are stripped of user identifiers before being used for quality analysis.

- Purpose (Prompt Engineering): This feedback is used exclusively for Prompt Optimization, refining the system instructions and logical constraints we send to the LLM to ensure better formatting and reasoning in the future.
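The anonymization step can be sketched as an allowlist over the feedback record. This is a hypothetical illustration: the field names and the record shape are assumptions, and the sketch only drops identifier fields; it does not inspect the prompt text itself.

```python
from typing import Any, Dict

# Only these fields are kept; user id, email, workspace, and anything else are dropped.
ALLOWED_FEEDBACK_FIELDS = ("prompt", "response", "rating")


def anonymize_feedback(record: Dict[str, Any]) -> Dict[str, Any]:
    """Keep the rated prompt/response pair and discard user identifiers."""
    return {key: record[key] for key in ALLOWED_FEEDBACK_FIELDS if key in record}


sample = {
    "user_id": 42,
    "email": "user@example.com",
    "prompt": "Sum the Amount column",
    "response": "sum(field('Amount'))",
    "rating": "thumbs_up",
}
cleaned = anonymize_feedback(sample)
```

An allowlist is used rather than a blocklist so that any field added to the record later is excluded by default.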

2.6 Ephemeral Processing

Your data is sent strictly for processing and is not cached or stored by Baserow’s AI layer.

- Ephemeral Processing: Inputs sent to the model provider are used solely to generate the response.

- Stateless Interaction: Once the response is returned to Baserow, the provider does not retain a long-term copy of your table data.

2.7 User Control & Review Workflows

Baserow is designed to ensure you remain the architect of your data. We distinguish between Interactive Workflows, where you review output, and Automated Workflows, where you verify logic.

- Interactive Preview: When generating formulas or summarizing text manually, the output is presented as a preview. You must explicitly click “Create Field” or “Save” to commit the data.

- On-Demand Execution: Generative content in tables executes upon your request. It triggers only when a user explicitly clicks the “Generate” button for a specific row, runs a batch action, or asks the AI assistant to “Build a table”.

- Automated Workflows: For fully automated workflows (e.g., “When a form is submitted, summarize the request”), the human control lies in the configuration phase. You define the prompt and the trigger conditions upfront. The AI executes autonomously based on your pre-validated logic, ensuring speed without sacrificing predictability.

Control here lies in the Testing Phase. We recommend testing AI prompts on sample data before activating the automation to ensure the outputs are consistent and reliable.

2.8 Improving Accuracy via Model Selection

Unlike many SaaS competitors that lock users into a single one-size-fits-all model, Baserow empowers you to control the intelligence level of your assistant.

- Model Swapping: Baserow allows you to switch between models to balance cost, speed, and intelligence. Users are not locked into a single model version. You can configure specific models for specific tasks.

- Complex Reasoning: If a standard model struggles with complex reasoning or specific formula syntax, you can switch the specific field or workspace to a more capable model to improve accuracy and reasoning capabilities.

- Routine Tasks: For simple summarization or translation, users can configure lighter, faster models to optimize for speed and cost.

- Specialized Models: Organizations can even connect to specialized or fine-tuned models relevant to their specific industry (e.g., medical or legal data) via compatible API endpoints.

- Temperature Control: Users can adjust the Temperature setting for each prompt directly in the UI. For tasks requiring strict logic (like formula generation), we recommend setting the Temperature to `0`. For creative tasks (like brainstorming marketing copy), a higher setting (e.g., `0.7` to `1.0`) allows for more variety.
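The model and temperature guidance above can be summarized as a per-task profile table. The sketch below is illustrative: the model names are placeholders rather than real model identifiers, and the summarization temperature is an example value that is not taken from Baserow's documentation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskProfile:
    model: str          # placeholder name, not a real model identifier
    temperature: float  # 0 for strict logic, higher for creative variety


TASK_PROFILES = {
    # Strict logic: a more capable model with temperature 0.
    "formula": TaskProfile(model="capable-model", temperature=0.0),
    # Routine work: a lighter, faster model (temperature here is an example value).
    "summarize": TaskProfile(model="fast-model", temperature=0.2),
    # Creative work: a higher temperature within the 0.7 to 1.0 range.
    "brainstorm": TaskProfile(model="capable-model", temperature=0.8),
}

DEFAULT_PROFILE = TaskProfile(model="fast-model", temperature=0.0)


def profile_for(task: str) -> TaskProfile:
    """Look up the profile for a task, falling back to a conservative default."""
    return TASK_PROFILES.get(task, DEFAULT_PROFILE)
```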

Section 3. Cloud Environment (SaaS)

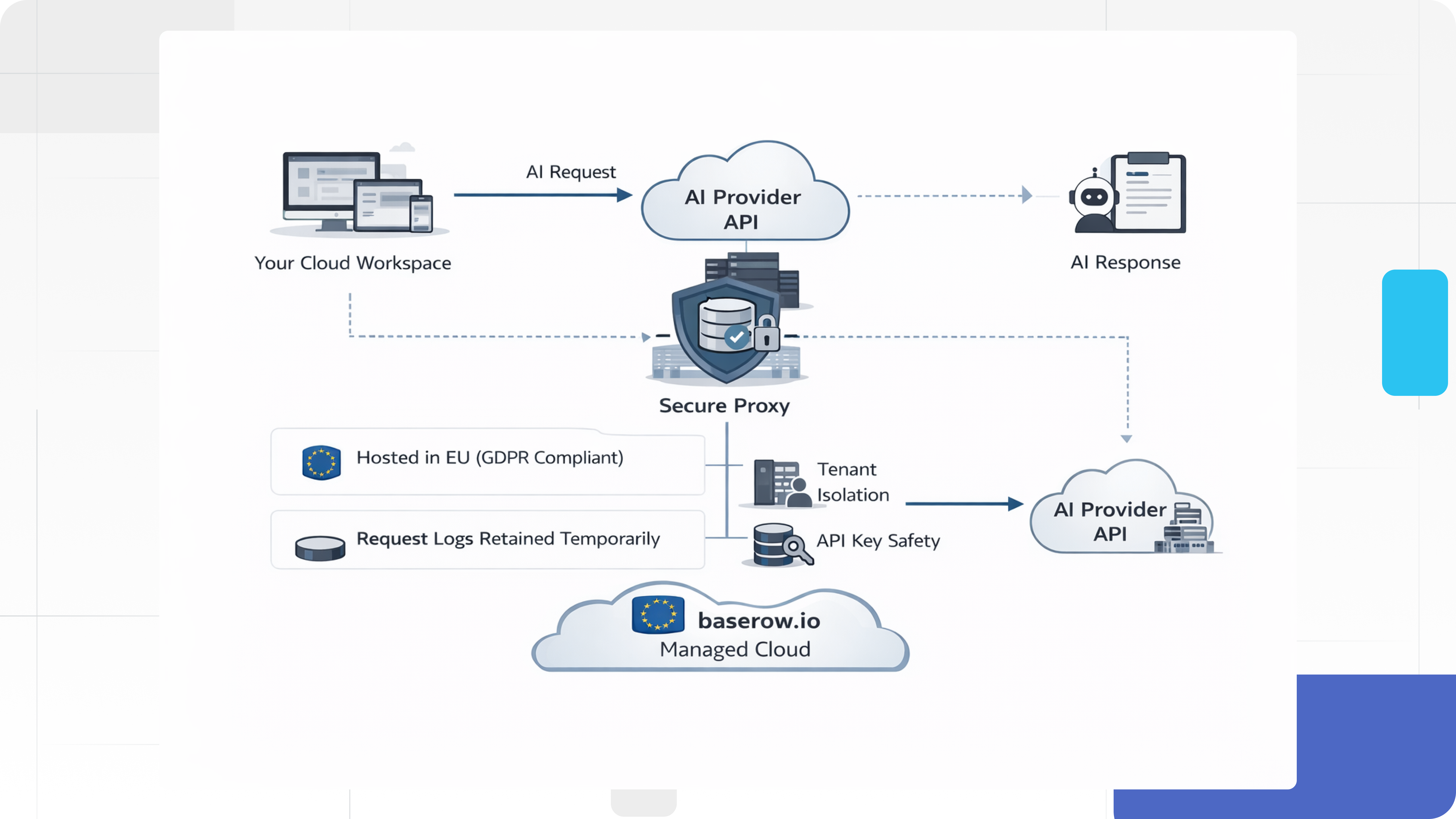

This section outlines the security architecture specific to the managed Baserow Cloud environment, detailing how our secure proxy handles tenant isolation, data residency, and system monitoring.

For users on baserow.io, we provide a managed, enterprise-grade proxy that ensures security without the overhead of server management.

3.1 Secure Proxy & Tenant Isolation

Cloud requests are routed through Baserow’s secure intermediary layer before reaching the model provider.

- Tenant Isolation: AI requests are authenticated at the start of every transaction. The AI service has no direct database access; it must request data via Baserow’s internal APIs, which enforce strict permission checks, ensuring User A’s context is never accessible to User B.

- Data Residency: Both Baserow’s core servers and our primary LLM processors are hosted in Europe (`eu-central-1` zone), ensuring alignment with GDPR requirements.

- API Key Safety: When you enter API keys into Baserow, they are stored securely in your database. For Cloud users, these keys are protected by our enterprise-grade infrastructure.
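The tenant isolation rule (the AI service never reads the database directly and every data request passes a permission check) can be sketched as follows. The in-memory dictionaries and the function name are hypothetical stand-ins, not Baserow's internal API.

```python
from typing import Dict, List, Set

# Hypothetical stand-ins for the permission store and the row storage.
WORKSPACE_MEMBERS: Dict[int, Set[str]] = {1: {"user_a"}, 2: {"user_b"}}
WORKSPACE_ROWS: Dict[int, List[dict]] = {
    1: [{"id": 1, "note": "belongs to A"}],
    2: [{"id": 2, "note": "belongs to B"}],
}


def fetch_rows_for_ai(user: str, workspace_id: int) -> List[dict]:
    """Return rows for an AI request only after the permission check passes."""
    if user not in WORKSPACE_MEMBERS.get(workspace_id, set()):
        raise PermissionError(f"{user} may not read workspace {workspace_id}")
    return WORKSPACE_ROWS.get(workspace_id, [])
```

Because the check runs inside the data-access function, an AI request made on behalf of User A cannot obtain User B's rows even if it asks for them.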

3.2 Retention within Baserow (Logs & History)

To support essential features like Undo/Redo and conversation history, Baserow temporarily stores prompt and output logs on Cloud (SaaS).

Logs are stored securely for 6 months so that users can view their request history and provide feedback on answers. These logs are protected by Baserow’s strict tenant isolation protocols.

3.3 Usage Data & System Monitoring

To maintain service reliability and prevent platform abuse, Baserow collects specific metadata regarding feature usage. It is critical to distinguish between Operational Metrics and Content Logging.

A. Operational Metrics (Collected)

Baserow collects aggregated, non-content telemetry to monitor system health and billing usage. This includes:

- Volume Metrics: The number of AI requests made per workspace, token usage counts, and error rates (e.g., 500 Internal Server Errors).

- Feature Adoption: Boolean flags indicating which features are enabled (e.g., “AI Field Enabled: True”) to help our product teams understand feature popularity.

- Performance Data: Latency logs (e.g., “Request took 400ms”) to optimize API response times.

Note: This data is strictly numerical and structural. It does not contain the text of your prompts or the data in your rows.
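To make the distinction concrete, the sketch below derives a metrics record from a request while discarding the content. The record fields and the whitespace-based token count are assumptions for illustration; real token counts would come from the model provider.

```python
from dataclasses import asdict, dataclass


@dataclass
class AIRequestMetrics:
    workspace_id: int
    prompt_tokens: int
    completion_tokens: int
    latency_ms: int
    status_code: int


def metrics_from_request(
    workspace_id: int, prompt: str, output: str, latency_ms: int, status_code: int
) -> dict:
    """Build a numeric-only record; the prompt and output text are not stored."""
    # Crude whitespace token count, used here as a stand-in for provider-reported usage.
    metrics = AIRequestMetrics(
        workspace_id=workspace_id,
        prompt_tokens=len(prompt.split()),
        completion_tokens=len(output.split()),
        latency_ms=latency_ms,
        status_code=status_code,
    )
    return asdict(metrics)


record = metrics_from_request(7, "Summarize the secret roadmap", "Short summary", 400, 200)
```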

B. Content Logging (Restricted)

Content refers to the specific text inputs (prompts), row data, and AI-generated outputs.

No Passive Logging: We do not silently log prompt content for general analytics or marketing research.

C. Abuse Monitoring & Exceptions

Automated systems may monitor usage volumes to prevent platform abuse (e.g., DDoS or spam generation).

As a Cloud provider, we reserve the right to retain temporary logs of prompt content only if triggered by automated security systems (e.g., detection of DDoS patterns or injection attacks). Access to these specific security logs is strictly limited to authorized personnel and is retained only for the duration of the investigation before permanent deletion.

Diagram: Cloud requests are routed through Baserow’s secure intermediary layer before reaching the model provider.

Section 4. Self-Hosted Environment

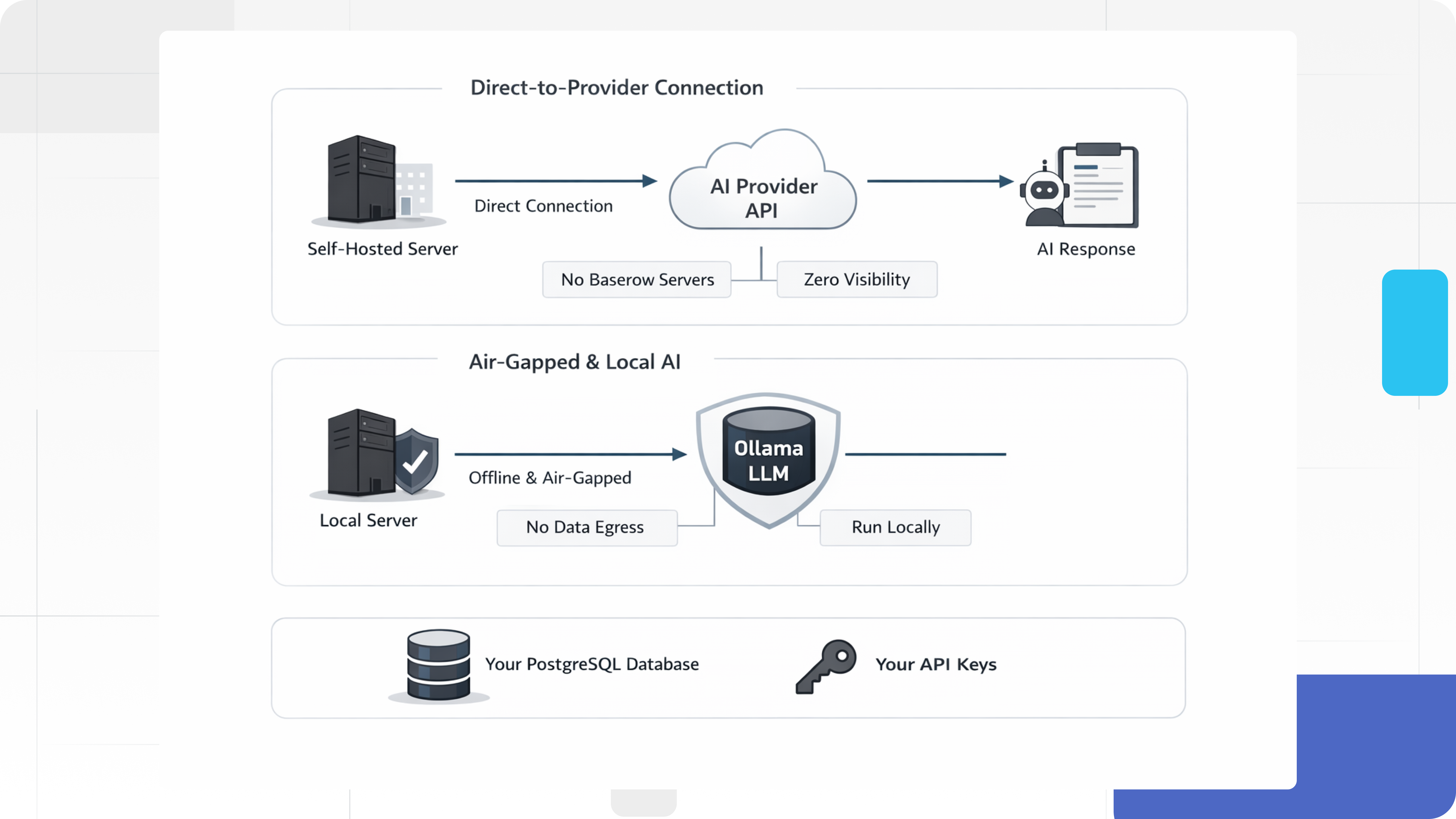

This section details the governance controls available exclusively to Self-Hosted Enterprise users, focusing on the Direct-to-Provider architecture, air-gapped capabilities, and infrastructure sovereignty.

For organizations with strict data sovereignty requirements (e.g., Healthcare, Finance, Government, Defence), Baserow offers a Bring Your Own Key (BYOK) architecture.

4.1 Direct-to-Provider Connection

The defining feature of Baserow’s Self-Hosted AI is the Direct-to-Provider Connection. In this configuration, Baserow removes itself from the data loop entirely.

When a user on a self-hosted instance triggers an AI action, the request is constructed locally on your infrastructure. The data packet travels directly from your server to the AI provider’s API endpoint via an encrypted channel.

- No Proxying: The data does not route through `api.baserow.io` or any Baserow-controlled cloud servers.

- Zero Visibility: Because the traffic moves directly from your infrastructure (e.g., your AWS VPC or on-premise server) to the AI Provider, Baserow B.V. has no technical visibility into your prompts, data, or outputs. You retain full ownership of the API keys and the data flow.

- Firewall & Network Control: Outbound traffic is limited strictly to the domains of the providers you configure. If you do not configure an external provider, no outbound AI traffic is possible. Local LLM deployments do not require domain or port whitelisting, while external APIs require customers to identify and whitelist necessary domains.
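Actual enforcement belongs in your firewall or egress proxy, but the allowlisting logic can be sketched in a few lines. The host set below is an example to adapt to the providers you configure; the function name is hypothetical.

```python
from urllib.parse import urlsplit

# Example allowlist: include only the API hosts of the providers you have configured.
ALLOWED_AI_HOSTS = frozenset({"api.openai.com", "api.anthropic.com"})


def is_outbound_allowed(url: str, allowed_hosts=ALLOWED_AI_HOSTS) -> bool:
    """Allow a request only if it uses HTTPS and targets an allowlisted host."""
    parts = urlsplit(url)
    return parts.scheme == "https" and parts.hostname in allowed_hosts
```

With an empty allowlist, every external URL is rejected, which matches the case where no external provider is configured.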

4.2 Air-Gapped & Local AI (Ollama)

For organizations with the strictest data classifications, such as Defence, Healthcare, or Finance, Baserow supports Ollama, allowing you to run Large Language Models (LLMs) within your own local network.

- Zero Egress: When using Ollama, your data never leaves your infrastructure. Models such as `llama2` or `mistral` run on your own hardware, allowing for a completely offline AI stack.

- Host Configuration: You provide the hostname of your local Ollama server in the instance settings. This typically runs locally on your own device.
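To verify that a local Ollama server is reachable at the host you plan to configure, you can build a request against Ollama's `/api/generate` endpoint. This sketch talks to Ollama directly and is not how Baserow issues its calls internally; the host value assumes Ollama's default port, and the prompt is an example.

```python
import json
import urllib.request


def build_ollama_request(host: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a non-streaming generate request for a local Ollama server."""
    body = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode("utf-8")
    return urllib.request.Request(
        url=f"{host.rstrip('/')}/api/generate",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Assumes Ollama is listening on its default local port.
request = build_ollama_request("http://localhost:11434", "mistral", "Summarize this row.")
# Sending it requires a running Ollama server: urllib.request.urlopen(request)
```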

4.3 Granular Retention Control (Logs & History)

Baserow Self-Hosted offers absolute sovereignty over log retention.

- Direct Database Access: Prompts and generated outputs are stored in your local PostgreSQL database. This gives your DevOps team the flexibility to implement custom retention policies via SQL maintenance jobs (e.g., purging logs every 30 days for strict compliance, or keeping them for 1 year for auditing).

- No Vendor Lock-in: Because the data resides on your infrastructure, Baserow B.V. cannot enforce arbitrary deletion schedules or retain data beyond your desired scope.
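A retention job of the kind described above can be sketched as a single parameterized `DELETE`. To keep the example runnable anywhere, it uses an in-memory SQLite database as a stand-in for PostgreSQL, and the table name `ai_request_log` and its columns are hypothetical; inspect your own schema before writing a real maintenance job.

```python
import sqlite3
from datetime import datetime, timedelta, timezone


def purge_old_logs(conn: sqlite3.Connection, retention_days: int, now: datetime) -> int:
    """Delete log rows older than the retention window and return how many were removed."""
    cutoff = (now - timedelta(days=retention_days)).isoformat()
    cursor = conn.execute("DELETE FROM ai_request_log WHERE created_at < ?", (cutoff,))
    conn.commit()
    return cursor.rowcount


# Demo data: one row 45 days old, one row 5 days old (timestamps stored as ISO 8601 UTC text).
now = datetime(2025, 1, 31, tzinfo=timezone.utc)
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE ai_request_log (id INTEGER PRIMARY KEY, prompt TEXT, created_at TEXT)")
conn.executemany(
    "INSERT INTO ai_request_log (prompt, created_at) VALUES (?, ?)",
    [
        ("old", (now - timedelta(days=45)).isoformat()),
        ("recent", (now - timedelta(days=5)).isoformat()),
    ],
)
removed = purge_old_logs(conn, retention_days=30, now=now)
remaining = [row[0] for row in conn.execute("SELECT prompt FROM ai_request_log")]
```

On PostgreSQL, the same idea would typically be scheduled as a cron job or similar, with the cutoff computed in SQL (for example, `now() - interval '30 days'`).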

4.4 Abuse Monitoring

In a self-hosted environment, Baserow B.V. has zero access to your data. We cannot perform abuse monitoring or human review of your prompts because the traffic never touches our servers. Your internal compliance team retains full authority over auditing usage.

4.5 API Key Safety (Infrastructure Sovereignty)

For self-hosted instances, API keys are stored within your internal PostgreSQL database. You retain complete sovereignty over your credential storage.

Because Baserow does not impose a proprietary encryption layer that could complicate key rotation or recovery, the security of these keys is fully governed by your infrastructure controls. We recommend securing your database volume with Disk-Level Encryption (e.g., LUKS, AWS EBS Encryption) and restricting database access to authorized internal subnets only to ensure credentials remain secure.

Diagram: A user’s request originates in the internal network, passes through the internal Baserow Server, and connects directly to the External AI Provider (or Local Ollama instance).

Section 5. Data Scope & Responsible Use

This section clarifies the technical limitations and specific data payloads for each AI tool, outlining how to balance the probabilistic nature of Large Language Models (LLMs) with Baserow’s deterministic controls.

At Baserow, we design AI tools to act as a capable co-pilot, not an autopilot. We believe that while AI should accelerate your workflow, the final decision and data integrity must always remain in your hands.

5.1 AI as a Probabilistic Tool

Generative AI models operate based on probability and statistical patterns, not absolute truth. While they are powerful engines for productivity, it is critical to understand their inherent characteristics:

- Hallucinations: Models may occasionally produce plausible-sounding but incorrect information. This is particularly relevant when generating complex formulas or summarizing technical data.

- Contextual Limits: The AI only knows what is explicitly provided in the payload. It does not know your entire business history unless that context is included in the specific input or row data.

5.2 Tool-Specific Data Context

To ensure responsible usage, it is critical to understand exactly what data is transmitted to the AI provider for each specific feature. Baserow minimizes data exposure by sending only the context required for the requested task.

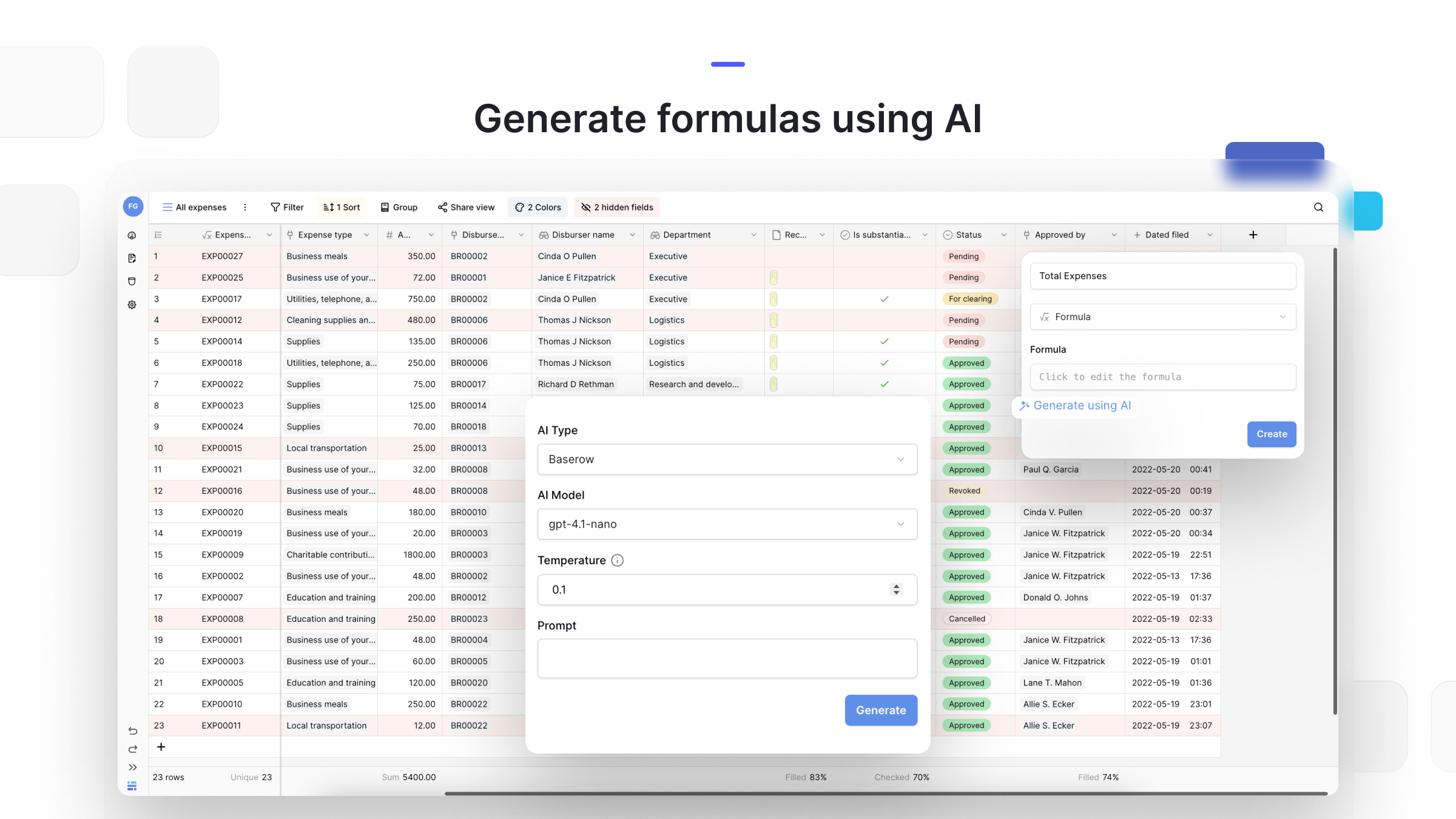

A. Formula Generation

- Purpose: Translating natural language into technical formula syntax (e.g., converting “calculate days between due date and today” into a Baserow formula).

- Data Payload:

- Prompt: The user’s text description of the desired logic.

- Schema Context: A list of column names and their field types (e.g., “Due Date (Date field)”, “Status (Single Select)”).

- No Row Data: Crucially, no actual cell data or row content is sent for formula generation. The AI only requires the structure of your table to write the syntax.

The Formula Generator offers a preview of the syntax before you apply it, ensuring logic is verified by a human.
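The formula-generation payload described above can be pictured as a prompt plus a schema listing, with no row values. This is a hypothetical sketch: the payload keys and the function name are assumptions, not Baserow's wire format.

```python
from typing import Dict, List


def build_formula_payload(prompt: str, fields: List[Dict[str, str]]) -> Dict[str, object]:
    """Combine the user's description with column names and types only."""
    schema = [f'{field["name"]} ({field["type"]})' for field in fields]
    # No cell values are read or included; only the table structure is sent.
    return {"prompt": prompt, "schema": schema}


payload = build_formula_payload(
    "calculate days between due date and today",
    [{"name": "Due Date", "type": "Date field"}, {"name": "Status", "type": "Single Select"}],
)
```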

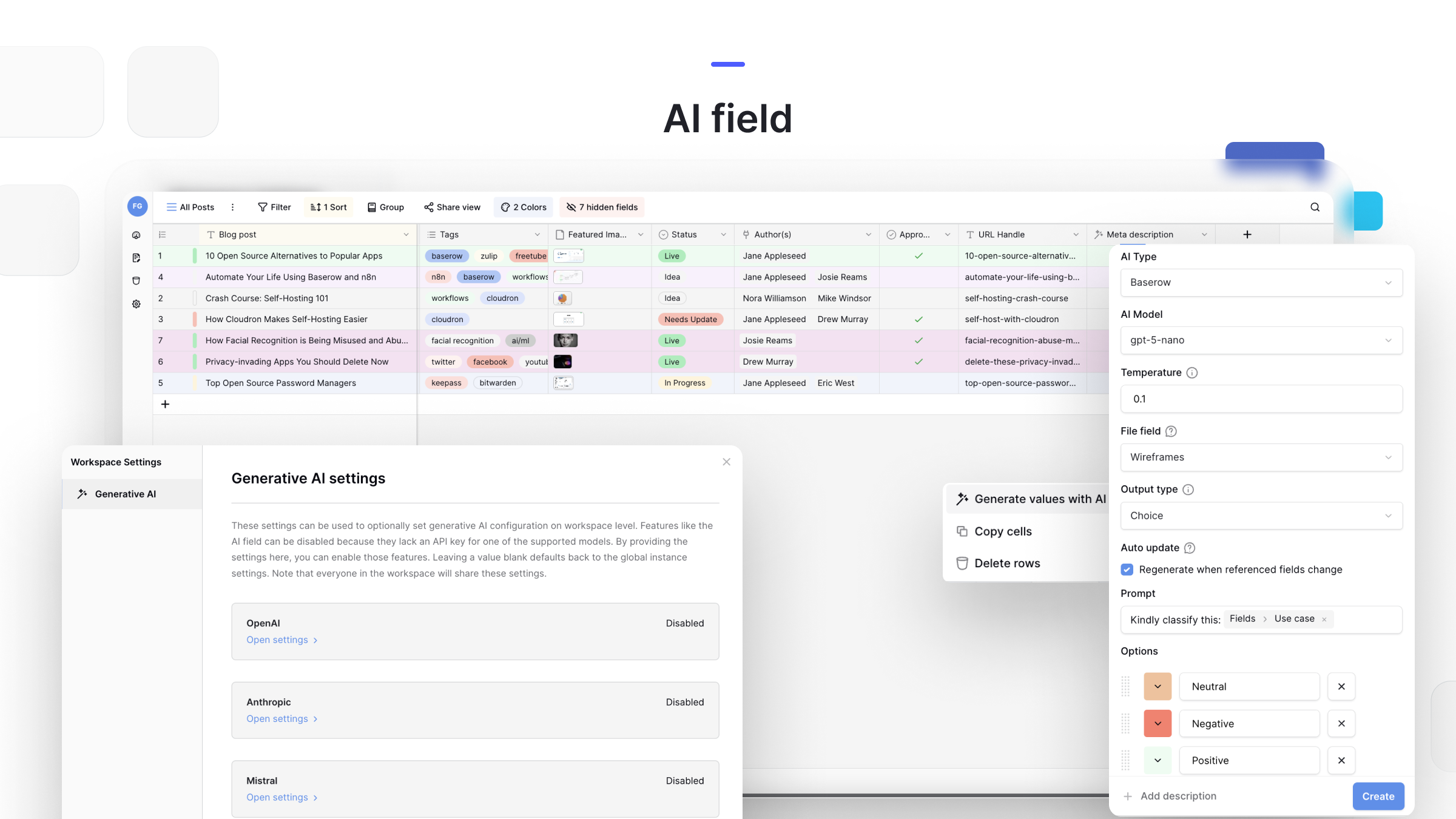

B. AI Field (Generative Content)

- Purpose: Generating new content (e.g., translation, summarization, or text generation) based on specific row inputs.

- Data Payload:

- Prompt: The specific instructions (e.g., “Summarize this feedback”).

- Targeted Row Data: Only the data from the specific columns referenced in the prompt is sent.

- Single Row Isolation: Processing occurs on a row-by-row basis. The AI does not see data from Row B while processing Row A, ensuring context isolation.
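The targeting and isolation rules above can be sketched as one payload per row that contains only the referenced columns. The `{Column}` placeholder syntax and the payload keys are assumptions made for this example; Baserow's own prompt reference syntax may differ.

```python
import re
from typing import Dict, List

# Hypothetical placeholder syntax: {Column Name}
PLACEHOLDER = re.compile(r"\{([^{}]+)\}")


def build_row_payload(prompt_template: str, row: Dict[str, str]) -> Dict[str, object]:
    """Include only the columns the prompt references, for a single row."""
    referenced = PLACEHOLDER.findall(prompt_template)
    row_data = {name: row[name] for name in referenced if name in row}
    return {"prompt": prompt_template, "row_data": row_data}


def build_payloads(prompt_template: str, rows: List[Dict[str, str]]) -> List[Dict[str, object]]:
    """One independent payload per row; no payload contains another row's data."""
    return [build_row_payload(prompt_template, row) for row in rows]


rows = [
    {"Feedback": "Great product", "Email": "a@example.com"},
    {"Feedback": "Too slow", "Email": "b@example.com"},
]
payloads = build_payloads("Summarize this feedback: {Feedback}", rows)
```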

Configuring an AI Field. Note the ability to select specific models and define the prompt context for row-level operations.

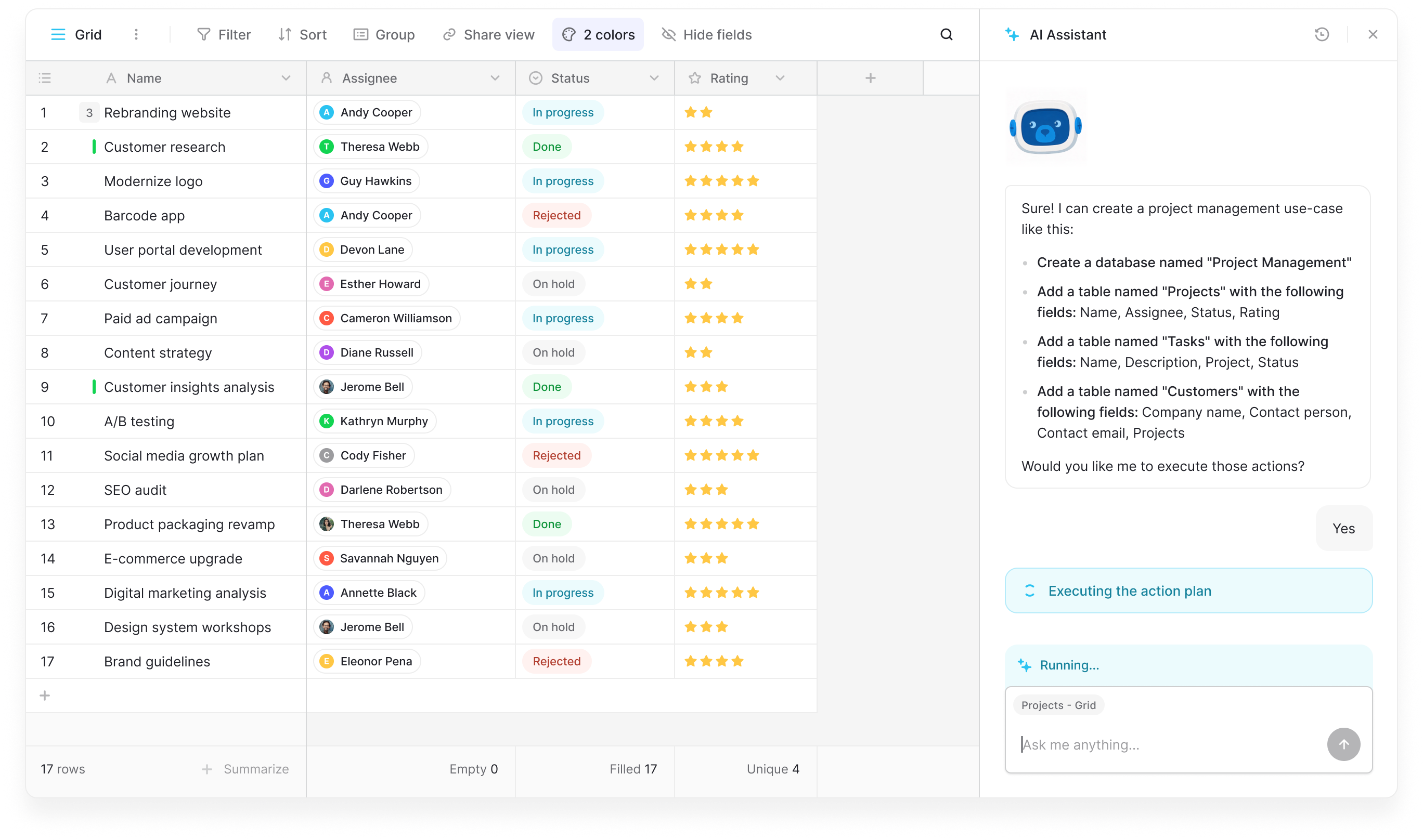

C. Table Analysis & Summarization (Kuma AI)

- Purpose: Answering broad questions about a dataset (e.g., “What are the top 3 recurring issues in this view?”).

- Data Payload:

- Full View Context: Unlike the row-by-row AI field, this tool analyzes the entire visible view.

- Hidden Columns: Warning: Data from hidden columns is included in the payload to ensure the AI has full context for accurate reasoning. Ensure that hidden columns do not contain sensitive PII (Personally Identifiable Information) that you do not wish to share with the AI provider.

- Batching Limits: To handle large datasets, the AI assistant retrieves data in batches (currently up to 100 rows per request). However, this is a batching limit, not a hard cap on total analysis. The tool can process multiple batches sequentially, provided the total dataset fits within the model’s context window. For extremely large tables exceeding the context window, we recommend filtering the view first to target specific subsets of data.
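The batching behavior can be sketched as fixed-size slices plus a running token budget. The batch size of 100 comes from the text above; the token estimator and the choice to stop once the budget would be exceeded are assumptions for illustration, not a description of the assistant's actual behavior.

```python
from typing import Callable, Iterator, List, Sequence

BATCH_SIZE = 100  # rows per request, per the current limit described above


def iter_batches(rows: Sequence[dict], batch_size: int = BATCH_SIZE) -> Iterator[List[dict]]:
    """Yield consecutive slices of at most batch_size rows."""
    for start in range(0, len(rows), batch_size):
        yield list(rows[start:start + batch_size])


def rows_within_budget(
    rows: Sequence[dict], max_tokens: int, estimate_tokens: Callable[[dict], int]
) -> List[dict]:
    """Collect whole batches until adding the next one would exceed the token budget."""
    selected: List[dict] = []
    used = 0
    for batch in iter_batches(rows):
        cost = sum(estimate_tokens(row) for row in batch)
        if used + cost > max_tokens:
            break
        selected.extend(batch)
        used += cost
    return selected


rows = [{"id": i} for i in range(250)]
batch_sizes = [len(batch) for batch in iter_batches(rows)]
```

If the budget check stops early, that is the signal to filter the view down to a smaller subset before asking the question again.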

The AI Assistant analyzes your current view to provide high-level insights, summaries, and data cleaning suggestions.

5.3 Data Types & Content Filtering

- No File Analysis for Kuma AI assistant: Currently, file attachments (PDFs, Images) within cells are ignored and not sent to the AI for table analysis.

- There is an option in the AI field to reference a file field. If the user sets up an OpenAI model, they can select a file field to use together with the prompt. In that case, files will be sent, while in every other case they won’t be sent or analyzed.

- Raw Data Transmission: Baserow does not pre-process or redact data before sending it to the LLM. Data is sent exactly as it exists in the cell. Users should exercise caution and avoid including sensitive personal data (PII) in fields targeted for AI processing unless their internal compliance policies allow it.

- Bias and Safety: When using providers like OpenAI or Anthropic, built-in enterprise safety filters help prevent the generation of harmful, biased, or inappropriate content. We encourage users to review AI outputs for potential bias, especially when using AI to draft external communications or analyze qualitative human feedback.

Section 6. Use Cases, Best Practices & Limitations

This section provides strategic guidance on best practices for high-value AI workflows while establishing clear boundaries regarding high-risk decision-making and verification.

To get the most value out of Baserow AI while maintaining data integrity, we recommend adhering to these defined use cases and staying aware of the current limitations.

6.1 Recommended Use Cases

Baserow AI is optimized for productivity tasks that involve transforming, summarizing, or generating structured data based on your existing records.

- Formula Generation: Rapidly constructing complex formulas (e.g., `if` statements, date calculations) by describing the desired logic in plain English. This reduces the need to memorize syntax.

- Content Summarization: Condensing long-form text (e.g., customer feedback, meeting notes) into concise summaries for easier reporting.

- Data Cleaning & Standardization: Analyzing inconsistent data entries and suggesting a standardized format (e.g., formatting phone numbers or normalizing addresses).

- Translation: Translating cell content into different languages for localization or cross-regional collaboration.

- Categorization/Tagging: Automatically assigning tags or categories to rows based on text analysis (e.g., tagging support tickets as “Urgent,” “Bug,” or “Feature Request”).

- Generative App Builder: Creating full database schemas from a prompt.

6.2 Limitations & Exclusions

Baserow AI is a productivity enhancer, not a substitute for professional judgment or critical infrastructure verification.

- Not for High-Risk Decision Making: Do not rely solely on AI outputs for critical decisions involving legal compliance, medical diagnosis, financial auditing, or safety-critical engineering calculations. Always verify these outputs manually.

- Contextual Limits: The AI operates strictly on the data provided in the specific prompt and selected row context. It does not see your entire database schema or cross-reference hidden tables unless explicitly configured to do so.

- Mathematical Precision: Large Language Models are text prediction engines, not calculators. While they can write formulas for you, asking them to perform direct arithmetic on large numbers within a text prompt can sometimes yield inaccuracies. We recommend using the AI to write the formula (which Baserow then executes mathematically) rather than asking the AI to calculate the answer directly.

- Real-Time Data: Unless connected to a model with web-browsing capabilities (which depends on your chosen provider), the AI does not have access to real-time external events (e.g., current stock prices or breaking news).

Section 7. Trust Resources & Provider Policies

This section provides verification resources, compliance documentation, and direct links to the commercial privacy policies of our AI partners.

As organizations accelerate their adoption of Generative AI, the need for robust security, transparency, and data sovereignty has never been greater. Baserow is committed to proving that you do not need to choose between powerful AI capabilities and strict data control.

By offering a dual-architecture model (secure Cloud SaaS for agility and Self-Hosted for absolute sovereignty), we ensure that the security boundary remains where it belongs: within your control. Whether you are generating complex formulas or analyzing sensitive datasets, our platform is designed to provide the utility of modern AI with the assurance that your data remains invisible to us and fully under your command.

We invite you to audit our security practices, review our open-source codebase, and deploy Baserow with the confidence that your AI strategy is built on a foundation of transparency.

7.1 Trust Resources

For deeper technical details on our security posture, compliance certifications, and data handling practices, please consult our official documentation:

- Baserow Security Portal: A comprehensive overview of our security standards, including GDPR compliance, penetration testing results, and ISO certifications.

- Developer Documentation: Technical guides on configuring Self-Hosted instances, managing environment variables for AI (BYOK), and setting up secure API connections.

- Open Source Repository: At Baserow, we believe in building in the open. As a fully open-source platform, our community, contributors, and developers have always been central to our growth. Security teams can audit our implementation by reviewing the source code on GitHub.

7.2 Provider-Specific Privacy Commitments

Baserow connects exclusively to the Enterprise/Commercial APIs of our model providers. Unlike consumer free-tier accounts, these commercial agreements explicitly prohibit the use of your data for model training.

We encourage security teams to verify these policies directly via the providers’ official trust portals:

- OpenAI Enterprise Privacy:

- Policy: “We do not train our models on your business data (data from ChatGPT Team, ChatGPT Enterprise, or our API Platform).” OpenAI Enterprise Privacy Portal

- Anthropic Commercial Terms:

- Policy: “By default, we will not use your inputs or outputs from our commercial products (e.g. Claude for Work, Anthropic API) to train our models.” Anthropic Commercial Privacy Center

- Mistral AI Commercial Terms:

- Policy: Commercial API data is processed with strict retention limits and is excluded from training pipelines under standard commercial terms. Mistral AI Legal & Privacy Hub
